0.1.4 (6), 226.6 MB

License: unset

Confinement: strict

Base: core24

OpenMemory Snap

A snap for OpenMemory.

OpenMemory is local memory infrastructure, powered by Mem0, that lets you carry your memory across AI apps. It provides a unified memory layer that stays with you, so agents and assistants can remember what matters across applications.

Docs: https://docs.mem0.ai/openmemory/overview

OpenMemory can run entirely locally by using Ollama for both the embedding model and the language model (LLM).

Download Ollama: https://ollama.com/download
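Before connecting OpenMemory to Ollama, it can help to confirm that the Ollama server is reachable and that the two models named in the llm and embedder settings on this page (`llama3.1:latest` and `nomic-embed-text:latest`) have been pulled. The sketch below is a hypothetical helper, not part of OpenMemory. It assumes Ollama's default address `http://localhost:11434` and uses Ollama's `GET /api/tags` endpoint, which lists the locally available models.

```python
import json
import urllib.request

# Assumption: Ollama listens on its default address, as in the config on this page.
OLLAMA_BASE_URL = "http://localhost:11434"

# Models referenced by the llm and embedder settings on this page.
REQUIRED_MODELS = ["llama3.1:latest", "nomic-embed-text:latest"]


def fetch_local_models(base_url: str = OLLAMA_BASE_URL, timeout: float = 5.0) -> dict:
    """Return the parsed JSON body of Ollama's GET /api/tags endpoint."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return json.load(resp)


def missing_models(tags_response: dict, required: list[str]) -> list[str]:
    """Return the required model names that are absent from an /api/tags response."""
    available = {model["name"] for model in tags_response.get("models", [])}
    return [name for name in required if name not in available]


if __name__ == "__main__":
    try:
        tags = fetch_local_models()
    except OSError as exc:  # urllib.error.URLError and timeouts are OSError subclasses
        print(f"Ollama not reachable at {OLLAMA_BASE_URL}: {exc}")
    else:
        missing = missing_models(tags, REQUIRED_MODELS)
        if missing:
            print("Pull these models first:", ", ".join(missing))
        else:
            print("All required models are available.")
```

If a model is reported missing, `ollama pull llama3.1` or `ollama pull nomic-embed-text` downloads it. The helper uses only the standard library, so it can run before any Mem0 dependencies are installed.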

llm

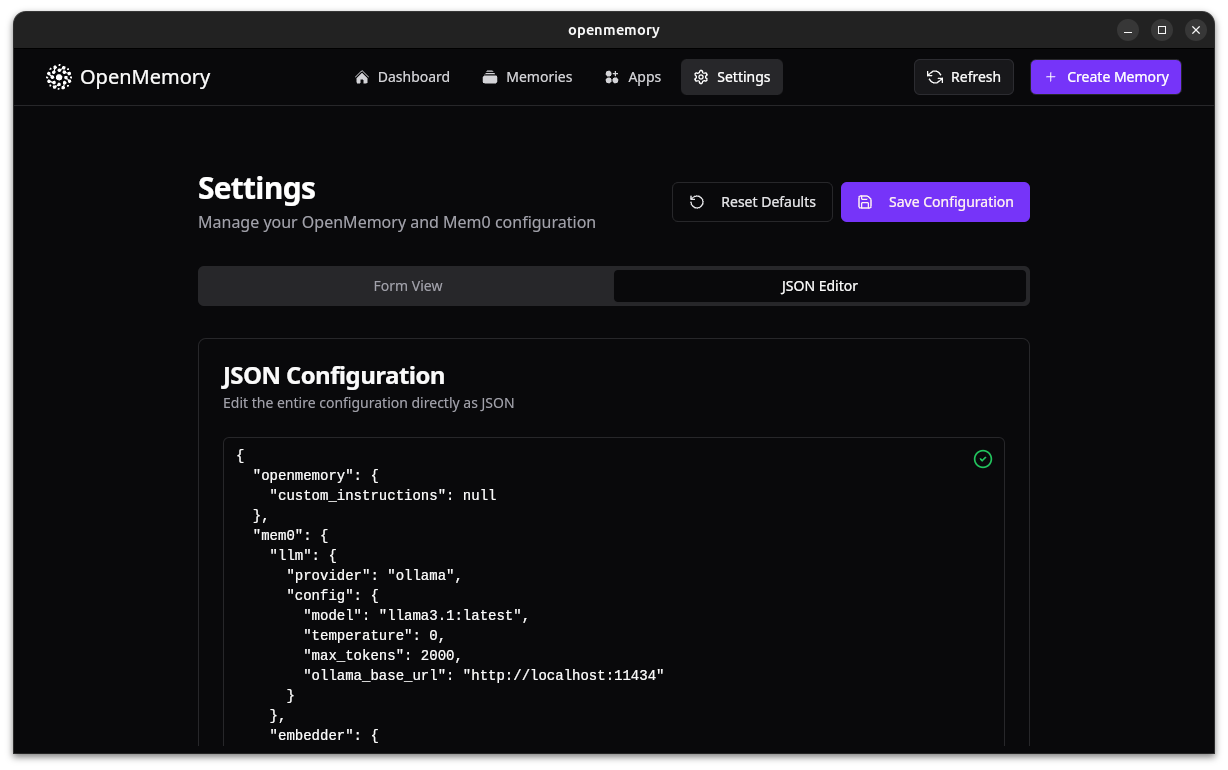

"provider": "ollama", "config": { "model": "llama3.1:latest", "temperature": 0, "maxtokens": 2000, "ollamabaseurl": "http://localhost:11434", # Ensure this URL is correct }embedder

"provider": "ollama", "config": { "model": "nomic-embed-text:latest", "embeddingdims": 768, "ollamabaseurl": "http://localhost:11434", }The OpenMemory with Ollama is a private, local-first memory server that creates a shared, persistent memory layer for your MCP-compatible tools.

It runs entirely on your machine, so context carries over between tools. Whether you move between development, planning, or debugging environments, your AI assistants can draw on relevant memories without you having to repeat instructions.
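The llm and embedder blocks above are fragments of a Mem0 configuration dictionary. The sketch below assembles them into one dictionary under `llm` and `embedder` keys and adds a small consistency check: `embedding_dims` has to match the length of the vectors the embedding model actually returns (the settings above use 768 for `nomic-embed-text`). The helper names `build_mem0_config` and `check_embedding_dims` are hypothetical. With the `mem0` Python package installed, a dictionary of this shape can be passed to `Memory.from_config`, but the Mem0 documentation linked above is the authority on which fields your version reads.

```python
# Hypothetical helpers; default values mirror the llm and embedder settings above.
OLLAMA_BASE_URL = "http://localhost:11434"


def build_mem0_config(
    llm_model: str = "llama3.1:latest",
    embed_model: str = "nomic-embed-text:latest",
    embedding_dims: int = 768,
    base_url: str = OLLAMA_BASE_URL,
) -> dict:
    """Combine the llm and embedder blocks into one Mem0-style config dict."""
    return {
        "llm": {
            "provider": "ollama",
            "config": {
                "model": llm_model,
                "temperature": 0,
                "max_tokens": 2000,
                "ollama_base_url": base_url,
            },
        },
        "embedder": {
            "provider": "ollama",
            "config": {
                "model": embed_model,
                "embedding_dims": embedding_dims,
                "ollama_base_url": base_url,
            },
        },
    }


def check_embedding_dims(config: dict, sample_vector: list[float]) -> None:
    """Raise ValueError if an embedding's length differs from embedding_dims."""
    expected = config["embedder"]["config"]["embedding_dims"]
    if len(sample_vector) != expected:
        raise ValueError(
            f"embedder returned {len(sample_vector)} dims, config says {expected}"
        )


if __name__ == "__main__":
    config = build_mem0_config()
    # With the mem0 package installed (not required by this sketch):
    #   from mem0 import Memory
    #   memory = Memory.from_config(config)
    check_embedding_dims(config, [0.0] * 768)  # stand-in for a real embedding
    print("config OK:", config["embedder"]["config"]["model"])
```

Building both blocks from one function keeps `ollama_base_url` identical for the LLM and the embedder, so changing the Ollama address in one place cannot leave the other pointing elsewhere.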

Powered by:

- Mem0: https://mem0.ai
- Ollama: https://ollama.com
- Qdrant: https://qdrant.tech/
- Tauri: https://tauri.app/

Update History

0.1.4 (6), 13 Dec 2025, 09:47 UTC

13 Nov 2025, 11:31 UTC

20 Nov 2025, 17:08 UTC

13 Dec 2025, 09:47 UTC